
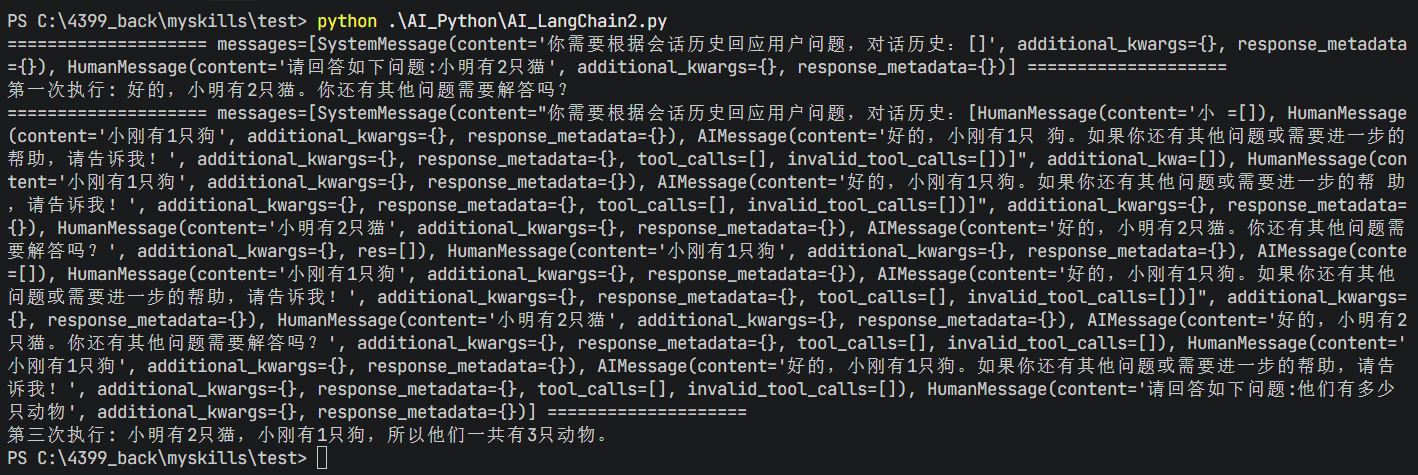

```python
import json
import os
from typing import Sequence

from langchain_core.chat_history import BaseChatMessageHistory
from langchain_core.messages import BaseMessage, message_to_dict, messages_from_dict
from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder
from langchain_core.runnables.history import RunnableWithMessageHistory
from langchain_community.chat_models.tongyi import ChatTongyi


class FileChatMessageHistory(BaseChatMessageHistory):
    """Chat history persisted as one JSON file per session."""

    def __init__(self, session_id: str, storage_path: str) -> None:
        self.session_id = session_id
        self.storage_path = storage_path
        self.file_path = os.path.join(self.storage_path, f"{self.session_id}.json")
        # Make sure the storage directory exists before the first write.
        os.makedirs(os.path.dirname(self.file_path), exist_ok=True)

    def add_messages(self, messages: Sequence[BaseMessage]) -> None:
        # Load the existing history, append the new messages, rewrite the file.
        all_messages = list(self.messages)
        all_messages.extend(messages)
        serialized = [message_to_dict(m) for m in all_messages]
        with open(self.file_path, "w", encoding="utf-8") as f:
            json.dump(serialized, f, ensure_ascii=False)

    @property
    def messages(self) -> list[BaseMessage]:
        # A missing file means this session has no history yet.
        try:
            with open(self.file_path, "r", encoding="utf-8") as f:
                return messages_from_dict(json.load(f))
        except FileNotFoundError:
            return []

    def clear(self) -> None:
        with open(self.file_path, "w", encoding="utf-8") as f:
            json.dump([], f)


# ChatTongyi reads the API key from the DASHSCOPE_API_KEY environment variable.
model = ChatTongyi(model="qwen-max")

chat_prompt = ChatPromptTemplate.from_messages([
    ("system", "Answer the user's question based on the conversation history."),
    # The stored history is injected here as a list of messages.
    MessagesPlaceholder("chat_history"),
    ("human", "Please answer the following question: {input}"),
])

str_parser = StrOutputParser()


def print_prompt(full_prompt):
    # Debug step: print the fully rendered prompt and pass it through unchanged.
    print("=" * 20, full_prompt.to_string(), "=" * 20)
    return full_prompt


base_chain = chat_prompt | print_prompt | model | str_parser


def get_history(session_id: str) -> FileChatMessageHistory:
    # Each session_id maps to ./chat_history/<session_id>.json
    return FileChatMessageHistory(session_id, "./chat_history")


conversation_chain = RunnableWithMessageHistory(
    base_chain,
    get_history,
    input_messages_key="input",
    history_messages_key="chat_history",
)

if __name__ == "__main__":
    session_config = {"configurable": {"session_id": "user_001"}}
    res = conversation_chain.invoke(
        {"input": "How many animals do they have?"}, config=session_config
    )
    print("Third run:", res)
```

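Because each session's history lives in its own JSON file, the "third run" can see turns persisted by earlier runs of the script. The cycle that `RunnableWithMessageHistory` drives around `base_chain` (load the session's messages, inject them under `history_messages_key`, run the chain, then append the new human and AI messages through `add_messages`) can be modeled roughly with the standard library alone. This is a simplified sketch, not LangChain's implementation: `JsonFileHistory` and `invoke_with_history` are hypothetical names, messages are plain dicts rather than LangChain message objects, and a fake chain stands in for the Qwen model.

```python
import json
import os
import tempfile
from typing import Callable


class JsonFileHistory:
    """Hypothetical stdlib stand-in for FileChatMessageHistory.

    Messages are simplified dicts like {"type": "human", "content": "..."},
    not the output of LangChain's message_to_dict.
    """

    def __init__(self, session_id: str, storage_path: str) -> None:
        os.makedirs(storage_path, exist_ok=True)
        self.file_path = os.path.join(storage_path, f"{session_id}.json")

    @property
    def messages(self) -> list:
        # A missing file means this session has no history yet.
        try:
            with open(self.file_path, "r", encoding="utf-8") as f:
                return json.load(f)
        except FileNotFoundError:
            return []

    def add_messages(self, messages: list) -> None:
        # Read everything, append, and rewrite the whole file (O(n) per call).
        all_messages = self.messages + list(messages)
        with open(self.file_path, "w", encoding="utf-8") as f:
            json.dump(all_messages, f, ensure_ascii=False)


def invoke_with_history(
    chain: Callable[[dict], str],
    get_history: Callable[[str], JsonFileHistory],
    inputs: dict,
    session_id: str,
) -> str:
    """Simplified model of the load -> invoke -> append cycle."""
    history = get_history(session_id)
    # 1. Load past turns and pass them to the chain under the history key.
    result = chain({**inputs, "chat_history": history.messages})
    # 2. Persist the new human turn and the reply together in one call.
    history.add_messages([
        {"type": "human", "content": inputs["input"]},
        {"type": "ai", "content": result},
    ])
    return result


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:

        def fake_chain(d: dict) -> str:
            # Stands in for the model: reports how much history it received.
            return f"saw {len(d['chat_history'])} past messages"

        def get_history(sid: str) -> JsonFileHistory:
            return JsonFileHistory(sid, tmp)

        for question in ["q1", "q2", "q3"]:
            print(invoke_with_history(fake_chain, get_history, {"input": question}, "user_001"))
        # prints "saw 0 past messages", then "saw 2 ...", then "saw 4 ..."
```

Each call adds two messages, so the third call sees four stored messages. Rewriting the whole file on every append keeps the code simple, at the cost of growing I/O as a session gets longer.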